Robustness Beyond Known Groups with Low-rank Adaptation

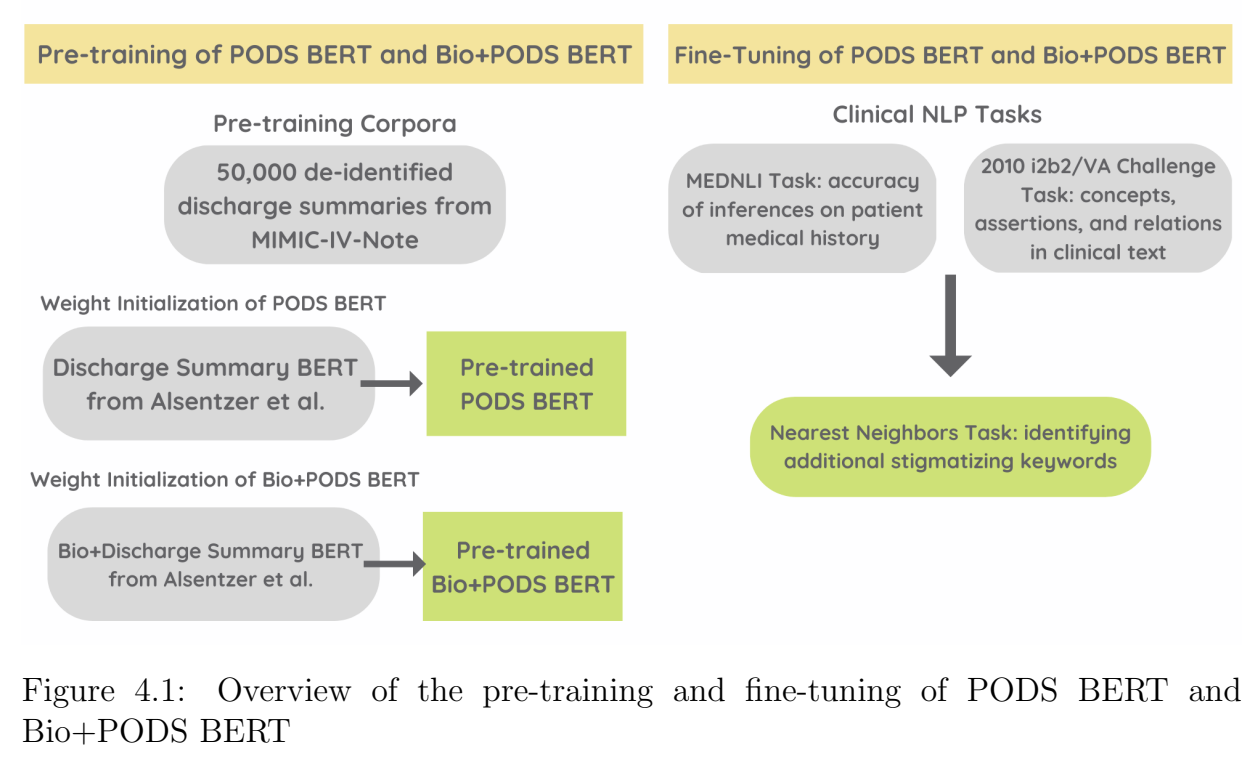

Hello! I am a PhD student at MIT LIDS, working on trustworthy machine learning with a focus on high-stakes domains like healthcare. I'm lucky to be co-advised by Professors Marzyeh Ghassemi and Collin Stultz. In 2023, I graduated from Princeton University with a B.S.E. in Operations Research and Financial Engineering, along with minors in Cognitive Science, Computer Science, Linguistics, and Statistics & Machine Learning. My senior thesis, supervised by the incredible Professor Christiane Fellbaum, explored NLP techniques for detecting and editing stigmatizing language in medical records.

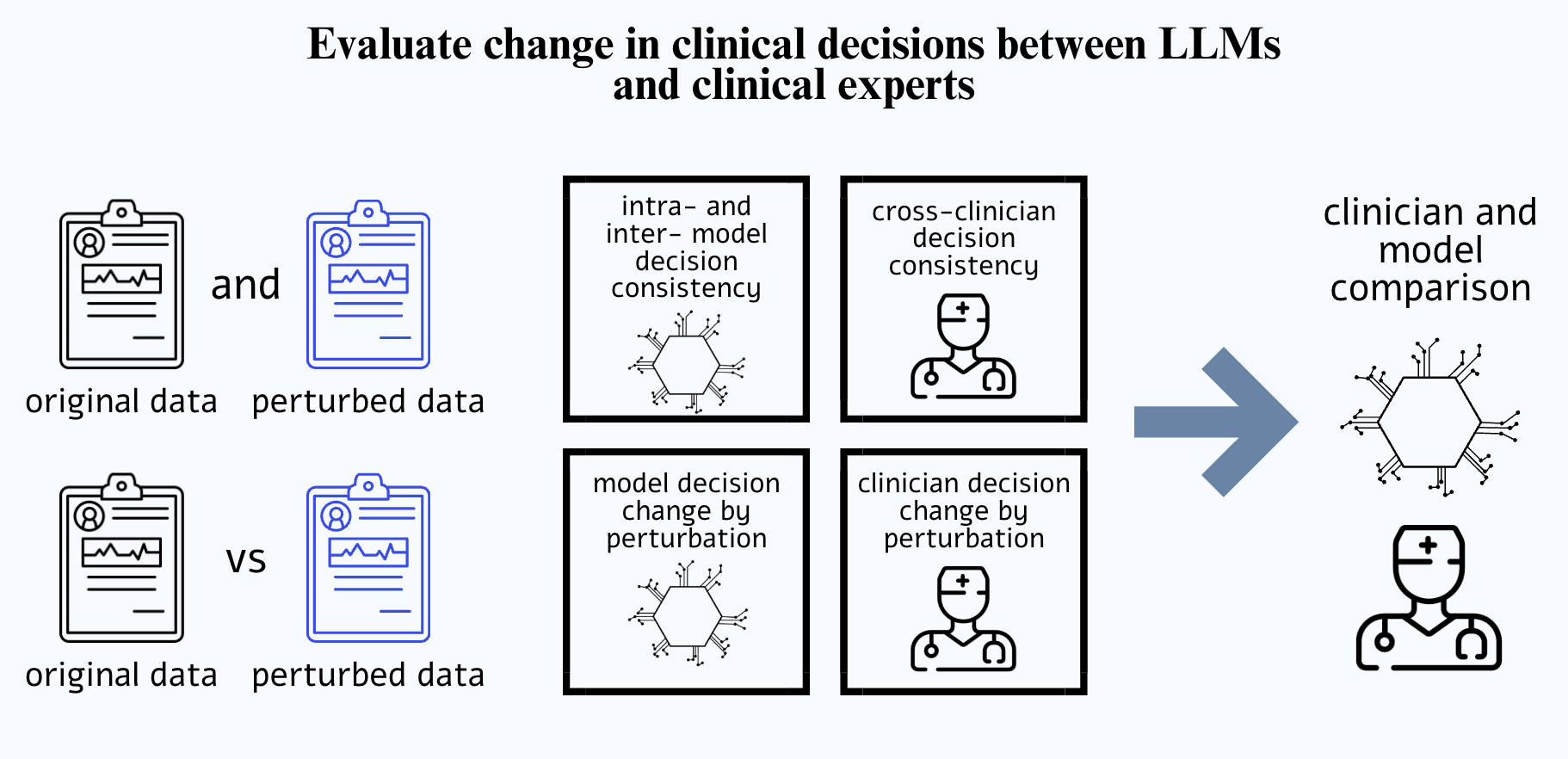

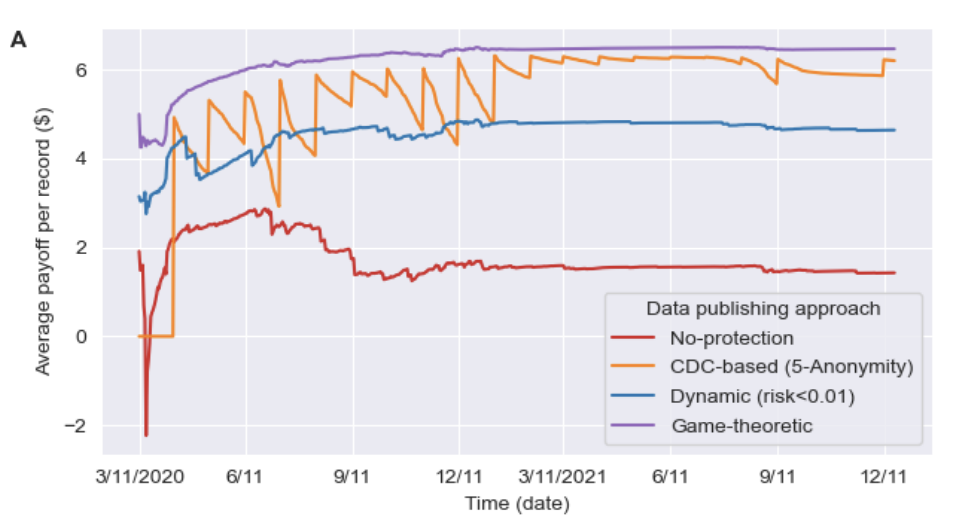

My research focuses on reliable, responsible, and trustworthy machine learning, and my work spans group robustness, GenAI agents, chain-of-thought reasoning, and backdoor attacks. I use tools and frameworks from optimization, probability, and statistics. My work has been published at ACM FAccT and in IEEE venues.

This past summer, I interned at IBM Research, where I investigated inverted model outputs to identify gaps in reasoning chains and improve hallucination detection for large language models.

You can reach me at abinitha@mit.edu. I'd love to hear from you!